Visualizing Risky Words — Part 2


This is a follow-up to my Visualizing Risky Words post. You’ll need to read that for context if you’re just jumping in now. Full R code for the generated images (which are pretty large) is at the end.

Aesthetics are the primary reason for using a word cloud, though on a well-crafted one you can fairly quickly recognize which words were most important. An interactive bubble chart is a tad better, as it lets you explore the corpus elements that contained the terms (a feature I have not added yet).

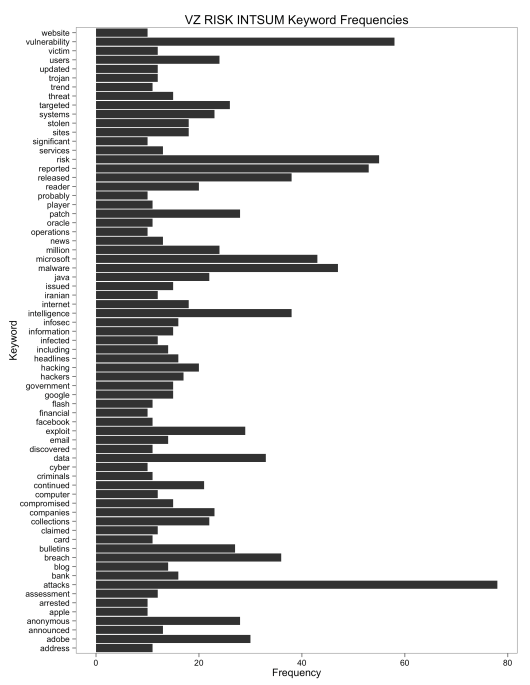

I would posit that a simple bar chart can be of similar use if one is trying to get a feel for overall word use across a corpus:

(click for larger version)

It’s definitely not as sexy as a word cloud, but it may be a better visualization choice if you’re trying to do analysis vs just make a pretty picture.

If you are trying to analyze a corpus, you might want to see which elements influenced the term frequencies the most, primarily to see if there were any outliers (i.e. strong influencers). With that in mind, I took @bfist’s corpus and generated a heat map from the top terms/keywords:

(click for larger version)

There are some stronger influencers, but there is a pattern of general, regular usage of the terms across each corpus component. This is to be expected for this particular set as each post is going to be talking about the same types of security threats, vulnerabilities & issues.
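One rough way to put a number on "strong influencer" is to look at what share of a keyword's total count comes from each post. The sketch below is base R only and runs on a small made-up matrix (the keywords, post names, counts and the `cutoff` value are all assumptions for illustration, not values from the INTSUM corpus); with the real data you would feed it the term-by-post matrix instead.

```r
# Toy term-by-post count matrix (made-up numbers, NOT from the real corpus)
m <- matrix(
  c(4, 5, 3, 4,
    1, 0, 9, 1,
    2, 2, 3, 2),
  nrow = 3, byrow = TRUE,
  dimnames = list(c("attack", "malware", "patch"),
                  paste0("post", 1:4))
)

# share of each keyword's total count contributed by each post (rows sum to 1)
share <- prop.table(m, margin = 1)

# flag posts that contribute more than an arbitrary share of a keyword
cutoff <- 0.5  # assumption: pick this by eye, like the other thresholds
idx <- which(share > cutoff, arr.ind = TRUE)

flagged <- data.frame(
  Keyword = rownames(share)[idx[, 1]],
  Post    = colnames(share)[idx[, 2]],
  Share   = round(share[idx], 2)
)
print(flagged)
```

A tile that gets flagged this way is a candidate outlier worth reading by hand; a keyword with no flagged posts is being used fairly evenly across the corpus.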

The R code below is fully annotated, but it’s important to highlight a few items in it and on the analysis as a whole:

- The extra, corpus-specific stopword list (“week”, “report”, “security”, “weeks”, “tuesday”, “update”, “team”) was built after manually inspecting the initial frequency breakdowns and applying my own judgment about the usefulness (or lack thereof) of including those terms. I’m sure another iteration would add more (like “released” and “reported”). Your expert view needs to shape the analysis and, in most cases, that analysis is far from a static/one-off exercise.

- Another judgment call was the choice of `0.7` in the `removeSparseTerms(tdm, sparse=0.7)` call. I started at `0.5` and worked up through `0.8`, inspecting the results at each iteration. Playing around with that number and re-generating the heatmap might be an interesting exercise to perform (hint).

- The same goes for the choice of `10` in `subset(tf, tf>=10)`. Tweak the value and re-do the bar chart vis!

- After the initial “ooh! ahh!” from a word cloud or even the above bar chart (though bar charts tend not to evoke emotional reactions), the next step is to ask yourself “so what?”. There’s nothing inherently wrong with generating a visualization just to make one, but it’s way cooler to actually have a reason or a question in mind. One possible answer to a “so what?” for the bar chart is to take the high-frequency terms and do a bigram/digraph breakdown on them, and even do a larger cross-term frequency association analysis (both of which we’ll do in another post).

- The heat map would be far more useful as a D3 visualization where you could select a tile and view the corpus elements with the term highlighted or even select a term on the Y axis and view an extract from all the corpus elements that make it up. That might make it to the TODO list, but no promises.
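To get a feel for how the two thresholds behave before re-running the plots, it can help to count how many terms survive at each setting. The sketch below is base R only and uses toy data; the keywords, counts and the helper `keep_dense_terms()` are hypothetical. The helper only approximates what `removeSparseTerms()` does (keep a term if it appears in more than `(1 - sparse)` of the documents) on a plain matrix; it is not the `tm` implementation itself.

```r
# Toy data: 8 "posts", each term present in a different number of them
# (made-up keywords and counts, purely for illustration)
n_docs   <- 8
doc_freq <- c(attack = 8, malware = 5, patch = 3, exploit = 2, botnet = 1)
m <- t(sapply(doc_freq, function(k) c(rep(1, k), rep(0, n_docs - k))))
colnames(m) <- paste0("post", seq_len(n_docs))

# hypothetical helper: keep terms found in more than (1 - sparse) of the posts
keep_dense_terms <- function(m, sparse) {
  m[rowSums(m > 0) > ncol(m) * (1 - sparse), , drop = FALSE]
}

# sweep the same range of sparse values mentioned above
sparse_values <- c(0.5, 0.6, 0.7, 0.8)
terms_kept <- sapply(sparse_values, function(s) nrow(keep_dense_terms(m, s)))
print(data.frame(sparse = sparse_values, terms_kept = terms_kept))

# same idea for the frequency cut-off used for the bar chart
tf      <- c(attack = 25, malware = 14, patch = 9, exploit = 6, botnet = 2)
cutoffs <- c(5, 10, 15)
bars    <- sapply(cutoffs, function(k) length(subset(tf, tf >= k)))
print(data.frame(cutoff = cutoffs, bars = bars))
```

Raising `sparse` lets rarer terms back into the heat map, while raising the frequency cut-off trims the bar chart; watching the counts change is a quick sanity check before regenerating either image.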

I deliberately tried to make this as simple as possible for those new to R, to show how straightforward and brief text corpus analysis can be (there are fewer than 20 lines of code, excluding library imports, whitespace, comments and the unnecessary expansion of some of the tm function calls that could have been combined into one). Furthermore, this is really just a basic demonstration of tm package functionality. The post/code is also aimed pretty squarely at the information security crowd, as we tend to not like examples that aren’t in our domain. Hopefully it makes a good starting point for folks and, as always, questions/comments are heartily encouraged.

# need this NOAWT setting if you're running it on Mac OS; doesn't hurt on others

Sys.setenv(NOAWT=TRUE)

library(ggplot2)

library(ggthemes)

library(tm)

library(SnowballC) # stemmer used by tm's stemDocument; replaces the older Snowball package

library(RWeka)

library(reshape)

# read in the raw corpus text

# you could read directly from @bfist's source : http://l.rud.is/10tUR65

a = readLines("intext.txt")

# convert raw text into a Corpus object

# each line will be a different "document"

c = Corpus(VectorSource(a))

# clean up the corpus (function calls are obvious)

c = tm_map(c, content_transformer(tolower)) # newer tm versions need base functions wrapped like this

c = tm_map(c, removePunctuation)

c = tm_map(c, removeNumbers)

# remove common stopwords

c = tm_map(c, removeWords, stopwords())

# remove custom stopwords (I made this list after inspecting the corpus)

c = tm_map(c, removeWords, c("week","report","security","weeks","tuesday","update","team"))

# perform basic stemming : background: http://l.rud.is/YiKB9G

# save original corpus

c_orig = c

# do the actual stemming

c = tm_map(c, stemDocument)

c = tm_map(c, stemCompletion, dictionary=c_orig)

# create term document matrix : http://l.rud.is/10tTbcK : from corpus

tdm = TermDocumentMatrix(c, control = list(wordLengths = c(1, Inf))) # newer tm uses wordLengths in place of minWordLength

# remove the sparse terms (requires trial->inspection cycle to get sparse value "right")

tdm.s = removeSparseTerms(tdm, sparse=0.7)

# we'll need the TDM as a matrix

m = as.matrix(tdm.s)

# datavis time

# convert matrix to data frame

m.df = data.frame(m)

# quick hack to make keywords - which got stuck in row.names - into a variable

m.df$keywords = rownames(m.df)

# "melt" the data frame ; ?melt at R console for info

m.df.melted = melt(m.df)

# not necessary, but I like decent column names

colnames(m.df.melted) = c("Keyword","Post","Freq")

# generate the heatmap

hm = ggplot(m.df.melted, aes(x=Post, y=Keyword)) +

geom_tile(aes(fill=Freq), colour="white") +

scale_fill_gradient(low="black", high="darkorange") +

labs(title="Major Keyword Use Across VZ RISK INTSUM 202 Corpus") +

theme_few() +

theme(axis.text.x = element_text(size=6))

ggsave(plot=hm,filename="risk-hm.png",width=11,height=8.5)

# not done yet

# better? way to view frequencies

# sum rows of the tdm to get term freq count

tf = rowSums(as.matrix(tdm))

# we don't want all the words, so choose ones with 10+ freq

tf.10 = subset(tf, tf>=10)

# wimping out and using qplot so I don't have to make another data frame

bf = qplot(names(tf.10), tf.10, geom="col") + # "col" plots the given y values; recent ggplot2 rejects "bar" with a y value

coord_flip() +

labs(title="VZ RISK INTSUM Keyword Frequencies", x="Keyword",y="Frequency") +

theme_few()

ggsave(plot=bf,filename="freq-bars.png",width=8.5,height=11)
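If the `melt()` step in the heat map code feels like magic, it may help to see the same wide-to-long reshaping done with base R on a tiny matrix. This is only a sketch with made-up keywords, post names and counts; `as.data.frame(as.table(...))` is swapped in here as a stand-in for `melt()` and is not what the script above uses.

```r
# small term-by-post matrix (toy values, NOT from the real corpus)
m <- matrix(c(4, 1, 5, 0, 3, 9), nrow = 2,
            dimnames = list(c("attack", "malware"), c("X1", "X2", "X3")))

# wide -> long: one row per (keyword, post) pair, which is the shape
# that geom_tile() wants for a heat map
long <- as.data.frame(as.table(m), stringsAsFactors = FALSE)
colnames(long) <- c("Keyword", "Post", "Freq")
print(long)
```

Each cell of the matrix becomes one row of the long data frame, so a 2 x 3 matrix turns into 6 rows.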
