Develop Performance Benchmark with GRNN

As noted in https://github.com/statcompute/GRnnet, the General Regression Neural Network (GRNN) is well suited to developing performance benchmarks for a variety of risk models. People might wonder what the purpose of a performance benchmark is and why we would need one at all. A model developer sometimes has to answer questions about how well a model will perform even before the model is complete. Likewise, a model validator may wonder whether the model being validated performs reasonably given the data used and the effort spent. A performance benchmark, built with the same data sample but an alternative methodology, is therefore called for to address these questions.

While a performance benchmark can take various forms, including but not limited to business expectations, industry practices, or vendor products, a model-based approach should possess the following characteristics:

– Quick prototype with reasonable efforts

– Comparable baseline with acceptable outcomes

– Flexible framework without strict assumptions

– Practical application to broad domains

With both empirical and conceptual advantages, GRNN meets each of the requirements above and can therefore be considered an appropriate candidate for developing performance benchmarks for a wide variety of models.

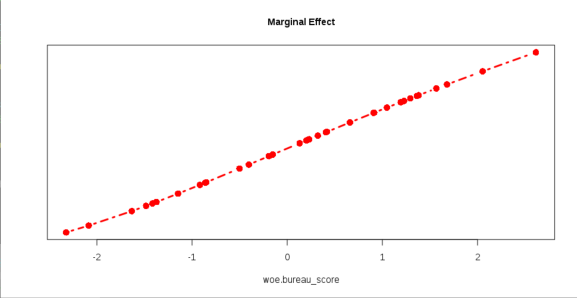

Below is an example illustrating how to use GRNN to develop a benchmark model for the logistic regression shown in https://statcompute.wordpress.com/2019/05/04/why-use-weight-of-evidence/. The function grnn.margin() is also used to explore the marginal effect of each attribute in a GRNN.
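To make the benchmarking idea concrete, here is a minimal sketch in plain Python rather than with the GRnnet package. It assumes the standard GRNN formulation, in which a prediction is the Gaussian-kernel-weighted average of the training outcomes, and it scores the result with AUC. The names grnn_predict, auc, and sigma, as well as the toy data, are hypothetical and chosen only for illustration; they are not the package's API or the data used in the original post.

```python
import math


def grnn_predict(train_x, train_y, x, sigma):
    """Predict at x as a Gaussian-kernel-weighted average of training outcomes.

    sigma is the smoothing parameter (an assumed name): small values track the
    nearest training points, large values approach the overall mean of train_y.
    """
    weights = []
    for xi in train_x:
        dist2 = sum((a - b) ** 2 for a, b in zip(xi, x))
        weights.append(math.exp(-dist2 / (2.0 * sigma ** 2)))
    total = sum(weights)
    if total == 0.0:
        # All weights underflowed to zero: fall back to the overall mean.
        return sum(train_y) / len(train_y)
    return sum(w * y for w, y in zip(weights, train_y)) / total


def auc(scores, labels):
    """Share of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for s, l in zip(scores, labels) if l == 1]
    neg = [s for s, l in zip(scores, labels) if l == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Made-up toy data: two attributes scaled to [0, 1] and a binary outcome.
train_x = [(0.1, 0.2), (0.2, 0.1), (0.8, 0.9), (0.9, 0.7), (0.3, 0.3), (0.7, 0.8)]
train_y = [0, 0, 1, 1, 0, 1]
test_x = [(0.15, 0.15), (0.85, 0.8), (0.25, 0.3), (0.75, 0.9)]
test_y = [0, 1, 0, 1]

# Score the hold-out cases with the GRNN and summarize them with AUC.
benchmark_scores = [grnn_predict(train_x, train_y, x, sigma=0.2) for x in test_x]
benchmark_auc = auc(benchmark_scores, test_y)
print("GRNN benchmark AUC:", benchmark_auc)
```

In practice, the AUC computed this way would be set against the AUC of the model under review on the same hold-out sample, and sigma would be tuned (for example by cross-validation) rather than fixed by hand.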
