Comparing the Bradley Terry model to betting odds

Want to share your content on R-bloggers? click here if you have a blog, or here if you don't.

In this previous post, I described the Bradley-Terry model and showed how we could use it to predict game outcomes in the NBA 2018-19 regular season. After ffitting the Bradley-Terry model on the first half of the regular season (with and without home advantage), I used the model to predict win probabilities for the second half of the season. The models gave test Brier scores of 0.213 and 0.219, which I said was no longer than random 50-50 guessing (which has a Brier score of 0.25).

In private correspondence, Justin Dyer pointed out to me that it’s not clear a priori that Brier scores of ~0.21 are bad: we should benchmark the scores against other models, or the Brier scores implied by bookmaking odds. Presumably bookmaking odds incorporate a lot more information than the simple Bradley-Terry model above (e.g. injuries, team’s recent form).

In this post, we compare the Bradley-Terry model against betting odds for that same data. All the code in this post can be found in one script here.

Getting the data

Betting odds data was obtained from here and games data was obtained from here.

First, import the packages that we will use:

library(DBI) library(plotROC) library(readr) library(tidyverse)

Next, we pull out the relevant game data from the SQLite database:

seasonYear <- 2018 # represents 2018-2019 season

# this filepath specific to my local drive

mainFile <- "basketball.sqlite"

# get all regular season games (only relevant columns

# selected)

mydb <- dbConnect(RSQLite::SQLite(), mainFile)

df <- dbGetQuery(mydb, "SELECT * FROM Game")

dbDisconnect(mydb)

season_df <- df %>% mutate(GAME_DATE = as.Date(GAME_DATE),

SEASON = as.numeric(SEASON)) %>%

filter(SEASON == seasonYear) %>%

select(GAME_DATE, TEAM_NAME_HOME, TEAM_NAME_AWAY, WL_HOME) %>%

arrange(GAME_DATE)

head(season_df)

# GAME_DATE TEAM_NAME_HOME TEAM_NAME_AWAY WL_HOME

# 1 2018-10-16 Golden State Warriors Oklahoma City Thunder W

# 2 2018-10-16 Boston Celtics Philadelphia 76ers W

# 3 2018-10-17 San Antonio Spurs Minnesota Timberwolves W

# 4 2018-10-17 New York Knicks Atlanta Hawks W

# 5 2018-10-17 Phoenix Suns Dallas Mavericks W

# 6 2018-10-17 LA Clippers Denver Nuggets L

After that, we pull out the relevant betting odds data. The data frame contains several different types of odds as well as odds from different bookmakers. We use the Average_Line_ML column, representing the average moneyline bet from the bookmakers in the dataset. In the last line, we convert the moneyline odds to win probability (see this website for more details).

# this filepath specific to my local drive

vegas <- read_csv("vegas.txt")

bet_df <- vegas %>% filter(Location == "home") %>%

select(Date, Team, OppTeam, line = Average_Line_ML) %>%

mutate(winProb = ifelse(line < 0, -line / (100 - line), 100 / (100 + line)))

head(bet_df)

# # A tibble: 6 × 5

# Date Team OppTeam line winProb

# <date> <chr> <chr> <dbl> <dbl>

# 1 2018-10-16 Boston Philadelphia -210. 0.678

# 2 2018-10-16 Golden State Oklahoma City -1015. 0.910

# 3 2018-10-17 Charlotte Milwaukee 145 0.408

# 4 2018-10-17 Detroit Brooklyn -242. 0.708

# 5 2018-10-17 Indiana Memphis -294. 0.746

# 6 2018-10-17 Orlando Miami 116 0.463

Join the two datasets together:

season_teams <- sort(unique(season_df$TEAM_NAME_HOME))

bet_teams <- sort(unique(bet_df$Team))

names(bet_teams) <- season_teams

df <- season_df %>%

transmute(Date = GAME_DATE,

Team = bet_teams[TEAM_NAME_HOME],

OppTeam = bet_teams[TEAM_NAME_AWAY],

HomeWinLoss = WL_HOME) %>%

left_join(bet_df)

For some reason, 4 of the games did not have betting odds data. We impute a win probability of 0.5 to them (i.e. no information, guess a 50-50 coin flip):

df$winProb[is.na(df$winProb)] <- 0.5

Fitting the Bradley-Terry model

The chunk of code below fits the Bradley-Terry model (with and without home advantage) on the training data. This is an abbreviated version of the code in the previous post; go there if you would like more detail.

# Get data in a form suitable for the BT model

get_data_vec <- function(home_team, away_team, teams) {

vec <- rep(0, length(teams))

vec[teams == home_team] <- 1

vec[teams == away_team] <- -1

vec

}

X <- apply(df, 1,

function(row) get_data_vec(row["Team"],

row["OppTeam"],

bet_teams))

X <- t(X)

colnames(X) <- bet_teams

dim(X)

y <- as.numeric(df$HomeWinLoss == "W")

bt_df <- as.data.frame(cbind(X, y))

# split into train and test

n_train <- nrow(X) / 2

train_df <- bt_df[1:n_train, ]

test_df <- bt_df[(n_train + 1):nrow(X), ]

# train BT models

train_mod_home <- glm(y ~ ., data = train_df, family = binomial())

train_mod_no_home <- glm(y ~ . + 0, data = train_df, family = binomial())

Comparing predictions

We get the predictions for the models and betting odds on the test set:

pred_home <- predict(train_mod_home, newdata = test_df, type = "response") pred_no_home <- predict(train_mod_no_home, newdata = test_df, type = "response") pred_bet <- df$winProb[(n_train + 1):nrow(X)]

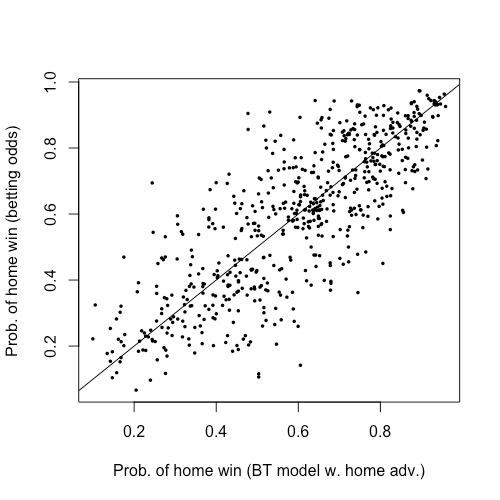

Before comparing the models on test performance, let’s have a look at the predictions themselves. The code below plots the win probability predictions from betting odds against those from the Bradley-Terry model with home advantage.

plot(pred_home, pred_bet, pch = 16, cex = 0.5,

xlab = "Prob. of home win (BT model w. home adv.)",

ylab = "Prob. of home win (betting odds)")

abline(0, 1)

It looks like there is quite a bit of variation between the two but they generally give the same directional outcome (i.e. they would predict the same team winning).

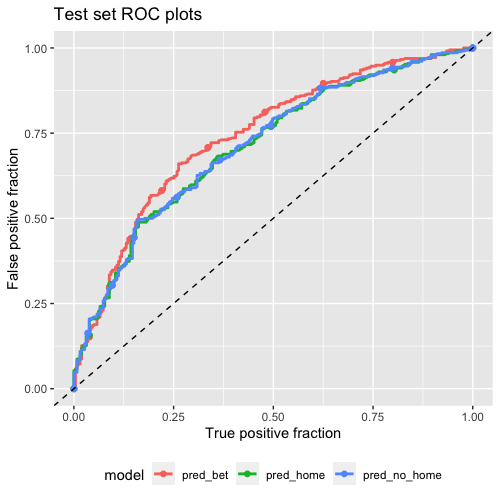

Now for test performance. The code below plots the test ROC curves for the 3 models:

pred_df <- data.frame(

truth = test_df$y,

pred_home = pred_home,

pred_no_home = pred_no_home,

pred_bet = pred_bet

) %>%

pivot_longer(pred_home:pred_bet,

names_to = "model",

values_to = "prediction")

roc_plot <- ggplot(pred_df) +

geom_roc(aes(d = truth, m = prediction, color = model), labels = FALSE)

roc_plot +

labs(x = "True positive fraction",

y = "False positive fraction",

title = "Test set ROC plots") +

geom_abline(slope = 1, intercept = 0, linetype = "dashed") +

theme(legend.position = "bottom")

From the ROC curve plot, betting odds (red line) clearly outperform the two Bradley-Terry models, but not by much! Finally, let’s compute the test AUCs and Brier scores:

# compute AUC calc_auc(roc_plot) # PANEL group AUC # 1 1 1 0.7421478 # 2 1 2 0.7120678 # 3 1 3 0.7133042 # compute Brier score mean((pred_bet - test_df$y)^2) # [1] 0.2027192 mean((pred_home - test_df$y)^2) # [1] 0.2130266 mean((pred_no_home - test_df$y)^2) # [1] 0.2186777

Betting odds has a better test Brier score, but only marginally: 0.203 vs. 0.213 & 0.219! This is quite surprising in that the Bradley-Terrry model is so simple and uses much less information than betting odds, yet its performance is not too far off.

R-bloggers.com offers daily e-mail updates about R news and tutorials about learning R and many other topics. Click here if you're looking to post or find an R/data-science job.

Want to share your content on R-bloggers? click here if you have a blog, or here if you don't.