Twitter coverage of the ISMB 2012 meeting: some statistics


OK, let’s do this: some statistics and visualization of the tweets for ISMB 2012.

First, thanks to Stephen Turner who got things started in this post at his excellent blog, Getting Genetics Done. Subscribe to his feed if you don’t already do so.

I’ve created a Github repository for this project (and future Twitter-related work). If you’d like to reproduce the analyses (or skip reading this post), there’s a Sweave file and the resulting PDF. The Sweave file contains a relative path to the data file, so to run the analysis you’ll need to clone the repository, cd to ismb/code/sweave and run R CMD Sweave ismb.Rnw from there.

1. Getting the data from Twitter

Much as described by Stephen, I grabbed the relevant tweets like this:

ismb1 <- searchTwitter("#ISMB", n = 1500, since = "2012-07-13", until = "2012-07-13")

creating lists ismb1, ismb2…ismb5 for days 1-5 (July 13-17) of the meeting, by editing the since/until dates as appropriate.
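Rather than editing the dates by hand, the five since/until pairs could be generated. This is only a sketch: make_windows() and its until_offset argument are hypothetical names, not part of twitteR. The default offset of 0 reproduces the call above, where both dates are the same; whether the end date needs to be a day later depends on how the search API treats "until", which is not checked here.

```r
# Hypothetical helper: one since/until window per meeting day.
# until_offset = 0 mirrors the call shown above (same date for both);
# use 1 if the API treats `until` as an exclusive end date (an assumption).
make_windows <- function(first_day, n_days, until_offset = 0) {
  days <- seq(as.Date(first_day), by = "day", length.out = n_days)
  data.frame(
    name  = paste0("ismb", seq_len(n_days)),
    since = format(days),
    until = format(days + until_offset),
    stringsAsFactors = FALSE
  )
}

windows <- make_windows("2012-07-13", 5)
print(windows)

# With twitteR loaded and authenticated, each row could then be used as:
# searchTwitter("#ISMB", n = 1500,
#               since = windows$since[i], until = windows$until[i])
```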

Next, I wrote this ugly hack which loads the 5 saved lists, then uses the twListToDF() function from the twitteR package to merge them into one data frame, called ismb. That’s saved here as ismb.RData or if you prefer, as CSV.

Note: I assume that all tweets in this data are from public timelines and as such, the authors do not object to mining and archiving. If that’s not the case and you don’t want me to store your Twitter handle at Github, let me know.

A quick check that all tweet IDs are unique…yes, they are and there we go: 3 162 tweets with the #ISMB hashtag, ready for analysis.
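For the record, the merge and the uniqueness check boil down to something like the following. The two small data frames are invented stand-ins for the per-day results of twListToDF(); only base R is used.

```r
# Toy stand-ins for two per-day data frames (made-up IDs and text).
# IDs are kept as character strings: real tweet IDs are too large to be
# represented exactly as R doubles.
day1 <- data.frame(id = c("101", "102"), text = c("a #ISMB", "b #ISMB"),
                   stringsAsFactors = FALSE)
day2 <- data.frame(id = c("103", "104"), text = c("c #ISMB", "d #ISMB"),
                   stringsAsFactors = FALSE)

# Stack the per-day frames into a single data frame.
merged <- do.call(rbind, list(day1, day2))

# anyDuplicated() returns 0 when every ID is unique.
n_dup <- anyDuplicated(merged$id)
print(n_dup)
print(nrow(merged))
```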

Note that the following code snippets require these libraries: ggplot2, xtable, RColorBrewer, tm, wordcloud and sentiment.

2. Tweets per day

This is pretty straightforward, since we can use as.Date() to convert each tweet timestamp to a date, then sum tweets by date using table():

ismb$date <- as.Date(ismb$created)

byDay <- as.data.frame(table(ismb$date))

colnames(byDay) <- c("date", "tweets")

print(ggplot(byDay) + geom_bar(aes(date, tweets), fill = "salmon") + theme_bw() + opts(title = "ISMB 2012 tweets per day"))

Several hundred tweets per day; quite a reasonable rate, especially on days 3-5 which is when the main event (ISMB “proper”) took place.
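To see what the counting step does without the real data, here is a small sketch with four invented timestamps. The time zone is passed to as.Date() explicitly so that the day boundaries are unambiguous.

```r
# Four made-up tweet timestamps, stored in UTC.
created <- as.POSIXct(c("2012-07-13 18:05:00", "2012-07-13 23:40:00",
                        "2012-07-14 01:15:00", "2012-07-15 17:30:00"),
                      tz = "UTC")
toy <- data.frame(created = created)

# Truncate each timestamp to its (UTC) calendar date.
toy$date <- as.Date(toy$created, tz = "UTC")

# table() counts rows per date; as.data.frame() gives one row per date.
byDayToy <- as.data.frame(table(toy$date))
colnames(byDayToy) <- c("date", "tweets")
print(byDayToy)
```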

3. Tweets per hour by day

ismb$hour <- as.POSIXlt(ismb$created)$hour

byDayHour <- as.data.frame(table(ismb$date, ismb$hour))

colnames(byDayHour) <- c("date", "hour", "tweets")

byDayHour$hour <- as.numeric(as.character(byDayHour$hour))

print(ggplot(byDayHour) + geom_bar(aes(hour, tweets), fill = "salmon", binwidth = 1) + facet_grid(date ~ hour) + opts(axis.text.x = theme_blank(), axis.ticks = theme_blank(), panel.background = theme_blank(), title = "ISMB 2012 tweets per hour by day"))


I’m not convinced that this code or the resulting plot are exactly what I was trying to achieve, but it’s probably close enough.

This code uses a ggplot facet_grid to display hourly tweets (horizontally) across 5 days (vertically). Twitter uses UTC for timestamps, whilst ISMB took place in Long Beach, California, which is UTC−8 (or currently, UTC−7 due to daylight saving). This explains why the majority of tweets occur from late afternoon to evening; local time is actually morning through afternoon. We could change the time zone before plotting but (1) not everyone who tweets is in the same time zone and (2) not all tweets occur at the same time as the event they describe. So I figure it’s best to leave times in UTC. For days 3-5, two “waves” are discernible which correspond to the morning and afternoon sessions; in particular, one imagines, the keynote talks.
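If you did want local times, the conversion is a one-liner. A minimal sketch with one invented timestamp, assuming the system's time zone database knows "America/Los_Angeles" (it normally does):

```r
# One made-up tweet timestamp during the meeting, stored in UTC.
created <- as.POSIXct("2012-07-15 17:00:00", tz = "UTC")

# The same instant viewed in UTC and in Long Beach local time.
utc_hour   <- as.POSIXlt(created, tz = "UTC")$hour
local_hour <- as.POSIXlt(created, tz = "America/Los_Angeles")$hour

# In July, California is on daylight time (UTC-7), so 17:00 UTC is 10:00 local.
print(c(utc = utc_hour, local = local_hour))
```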

4. Popular talks

ISMB 2012 used a Twitter hashtag for each talk based on category: keynote (KN), published paper (PP), special session (SS), technology track (TT) and workshop (WK). So for example, tweets might refer to the first keynote as #KN1. We can use this as some measure of talk popularity.

First we extract hashtags from the tweets, count them and sort:

words <- strsplit(ismb$text, " ")

hashtags <- lapply(words, function(x) x[grep("^#", x)])

hashtags <- unlist(hashtags)

hashtags <- tolower(hashtags)

hashtags <- gsub("[^A-Za-z0-9]", "", hashtags)

ht <- as.data.frame(table(hashtags))

ht <- ht[sort.list(ht$Freq, decreasing = FALSE),]
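One limitation of splitting on spaces is that a hashtag glued to leading punctuation, such as "(#PP44)", does not start with "#" and is missed. A possible alternative, shown here as a sketch on three invented tweets, is to pull hashtags straight out of the text with a regular expression:

```r
# Three made-up tweets; the second has a hashtag wrapped in parentheses.
tweets <- c("Great keynote #KN3 #ISMB",
            "Slides for (#PP44) are up #ismb",
            "No tags here")

# gregexpr() finds every run of '#' followed by letters or digits;
# regmatches() returns the matched substrings for each tweet.
m <- regmatches(tweets, gregexpr("#[A-Za-z0-9]+", tweets))

# Flatten, drop the leading '#', and lower-case so #ISMB and #ismb merge.
tags <- tolower(sub("^#", "", unlist(m)))

# Count and sort ascending.
htToy <- as.data.frame(table(hashtags = tags), stringsAsFactors = FALSE)
htToy <- htToy[order(htToy$Freq), ]
print(htToy)
```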

Now we can extract, for example, just the keynotes and plot the count:

kn <- ht[grep("^kn", ht$hashtags),]

kn$hashtags <- factor(kn$hashtags, levels = as.character(kn$hashtags))

print(ggplot(tail(kn)) + geom_bar(aes(hashtags, Freq), fill = "salmon") + coord_flip() + theme_bw() + opts(title = "ISMB 2012 tweets - keynotes"))


We have a winner: keynote 3, which was Analysis of transcriptome structure and chromatin landscapes by Barbara Wold. Perhaps the poor coverage of later keynotes can be partially explained by attendees leaving early?

The plots for other categories of talk look similar so I’ve omitted them here in the interests of not being tedious – see the PDF if you’re interested. For the record, most-tweeted talks in the other categories were:

- PP44: Toward interoperable bioscience data (Susanna-Assunta Sansone)

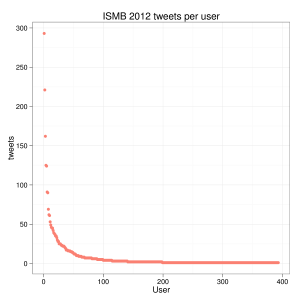

5. Users

First, let’s examine the “long tail”:

users <- as.data.frame(table(ismb$screenName))

colnames(users) <- c("user", "tweets")

users <- users[sort.list(users$tweets, decreasing = TRUE),]

print(ggplot(users) + geom_point(aes(1:nrow(users), tweets), color = "salmon") + theme_bw() + opts(title = "ISMB 2012 tweets per user") + xlab("User"))

393 individuals contributed tweets. However, the median tweets per user = 2 and only 11 individuals contributed > 50 tweets. For posterity, let’s name names:

| user | Freq |
|---|---|
| Chris_Evelo | 293 |
| genetics_blog | 221 |
| tladeras | 162 |
| iGenomics | 125 |
| WonderMixTape | 124 |
| alexishkin | 91 |
| bffo | 90 |
| spitshine | 69 |
| Albertagael | 62 |
| andrewsu | 61 |
| bioontology | 53 |
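The summary numbers (number of users, median tweets per user, users above a threshold) come from a few base R calls on the per-user counts. A sketch with invented screen names and a threshold of 2 rather than 50:

```r
# Made-up screen names, one entry per tweet.
screenName <- c(rep("alice", 5), rep("bob", 2), "carol", "dave", "dave")

# Count tweets per user and sort from most to least active.
usersToy <- as.data.frame(table(screenName), stringsAsFactors = FALSE)
colnames(usersToy) <- c("user", "tweets")
usersToy <- usersToy[order(usersToy$tweets, decreasing = TRUE), ]

n_users    <- nrow(usersToy)           # distinct contributors
med_tweets <- median(usersToy$tweets)  # typical contribution
n_heavy    <- sum(usersToy$tweets > 2) # users above the chosen threshold

print(usersToy)
print(c(users = n_users, median = med_tweets, heavy = n_heavy))
```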

6. Text mining

6.1 Word frequency

What was everyone talking about? I decided to extract words from tweets by splitting on space, keeping only those elements which consist entirely of letters and numbers, then removing “RT” and “MT” and finally stop words. This approach loses words in hashtags and words concatenated with punctuation characters, but it’s quicker than trying to clean up words case-by-case and the losses are very small.

sw <- stopwords("en")

words <- lapply(words, function(x) x[grep("^[A-Za-z0-9]+$", x)])

words <- unlist(words)

words <- tolower(words)

words <- words[!grepl("^[rm]t$", words)]

words <- words[!words %in% sw]

words.t <- as.data.frame(table(words))

words.t <- words.t[sort.list(words.t$Freq, decreasing = TRUE),]

print(xtable(head(words.t, 10), caption = "Top 10 words in tweets"), include.rownames = FALSE)

pal2 <- brewer.pal(8, "Dark2")

wordcloud(words.t$words, words.t$Freq, scale = c(8, .2), min.freq = 3, max.words = 200, random.order = FALSE, rot.per = .15, colors = pal2)

For those who like a wordcloud – see image, right. For those who like a top 10 list:

| words | Freq |
|---|---|
| data | 251 |
| talk | 238 |
| using | 132 |
| gene | 117 |
| protein | 80 |
| slides | 73 |
| network | 71 |
| people | 69 |
| analysis | 68 |
| ismb | 66 |

The “big” words in the wordcloud are pretty obvious; it’s the orange group which caught my attention. In particular: integration, networks, wikipedia and galaxy. Your response may be different which, of course, is all part of the subjective fun with wordclouds.
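A note on dropping elements by pattern: a logical index from grepl() is the safer choice, because a negative index built from grep() returns an empty vector when nothing matches. A small sketch on an invented word list containing no "rt" or "mt":

```r
# Made-up word list with no retweet markers in it.
wordsToy <- c("data", "talk", "network")

# grep() finds no matches and returns integer(0); negating that is still
# integer(0), so the subset is empty rather than unchanged.
dropped <- wordsToy[-grep("^[rm]t$", wordsToy)]

# grepl() returns one TRUE/FALSE per element, so nothing is lost.
kept <- wordsToy[!grepl("^[rm]t$", wordsToy)]

print(length(dropped))
print(kept)
```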

6.2 Sentiment analysis

I wondered whether to even include this section but…I thought it might be fun to try out the sentiment package. Even if (1) I think sentiment analysis is, on the whole, complete rubbish and (2) tweets from scientific meetings are hardly likely to be very emotional. Bear in mind that at this point, I no longer know what I’m doing. Anyway, let’s start with classification into positive, negative or neutral:

po <- classify_polarity(ismb$text, algorithm = "bayes")

print(xtable(table(po[, "BEST_FIT"]), caption = "Tweet polarity"))

# negative neutral positive
# 573 197 2392

Wow – tweets from ISMB were largely (76%) “positive”! What does that mean, exactly? Well, here’s a positive tweet:

“Often forgotten: CS Optimizing (network) models only makes sense when you keep the model simple to not overfit #ISMB #netbiosiig”

What’s positive about that? Optimizing? Simple? Here’s a negative tweet:

“Chris Sander in Network Biology SIG and how translational medicine: tries to explain how complicated it all is in cancer biology #ISMB”

Complicated? Cancer? Who knows. How about classification into one of 6 emotions:

em <- classify_emotion(ismb$text, algorithm = "bayes")

print(xtable(table(em[, "BEST_FIT"]), caption = "Tweet emotion"))

# anger disgust fear joy sadness surprise
# 24 4 11 149 44 37

ISMB was, clearly, a joyous occasion. Let’s look at a joyous tweet:

“Hooray! First #comicsans talk of the conference. Didn’t take long. It’s like an old friend that refuses to die. #ismb”

That’s my issue with sentiment analysis, right there. What people say and what they mean are often quite different things. I assume that people are applying machine learning to irony and sarcasm.
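As a sanity check on the two tables above, the proportions can be recomputed from the reported counts alone, without the sentiment package. Note that the emotion counts sum to far fewer tweets than the polarity counts; presumably the remainder received no best-fit emotion, though that is an assumption rather than something checked here.

```r
# Counts copied from the polarity and emotion tables above.
polarity <- c(negative = 573, neutral = 197, positive = 2392)
emotion  <- c(anger = 24, disgust = 4, fear = 11,
              joy = 149, sadness = 44, surprise = 37)

# Share of each polarity class, as a percentage of all tweets.
polarity_pct <- round(100 * prop.table(polarity), 1)
print(polarity_pct)

# Tweets with a best-fit emotion, and those without one (assumed unclassified).
n_emotion   <- sum(emotion)
n_remaining <- sum(polarity) - n_emotion
print(c(with_emotion = n_emotion, without = n_remaining))
```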

Summary

It’s difficult to compare coverage with previous ISMB meetings (2008-2011), since those meetings used FriendFeed for microblogging and I have not looked at Twitter coverage in previous years. My personal opinion is that a FriendFeed-like system is better suited to conference coverage due to (1) its less “haphazard” nature (what happens when people use hashtags incorrectly?); (2) longer-form comments and (3) threaded discussion. Unfortunately, FriendFeed has become equally non-viable as an archive due to lack of ongoing support and gradual decline in performance*.

As I mentioned in a previous post, it’s fortunate that I ran searchTwitter() on the day after ISMB closed, because ISMB-related tweets have since, effectively, vanished. This is a concern when using Twitter for conference coverage; it’s not an effective archive.

I’d say that Twitter coverage of ISMB 2012 was quite extensive, with at least 393 contributors and > 3000 tweets. There are undoubtedly more of both than were captured in this study, which focuses on days 1-5 of the meeting itself. The system of hashtags for each talk was reasonably successful and allowed capture of sufficient tweets for some analysis of talk popularity.

Perhaps, at the end of the day, Twitter coverage of conferences is as much about “capturing the moment” as it is about describing the content of talks.

* Not to mention idiots like myself who might destroy much of the archive by deleting their accounts. I remind you that I did, at least, archive all 2008-2011 ISMB data here.

