City Size and SUHI

Want to share your content on R-bloggers? click here if you have a blog, or here if you don't.

In the course of putting together data for my kriging project with the CRN stations, I got another idea related to a small but potentially important corner of the concerns over UHI in the global temperature index. For clarity I suppose I should make it clear that my position is that the UHI bias is probably low, less than .1C decade. That’s basically what Zeke and I found in our AGU poster work ( with help from Nick Stokes, Matt Menne and Claude Williams ). Nevertheless some people persist in claiming that the bias is bigger and further than the bias can even be found in small cities and towns. There isn’t much peer reviewed science to back up this claim, but there are a few “studies” here and there looking at the problem. Here is short incomplete bibliography. Torok 2001

http://www.warwickhughes.com/climate/seozuhi.htm

; Spenser

http://www.drroyspencer.com/2012/04/regional-u-s-population-adjustments-to-surface-temperatures-since-1973-still-little-warming/

; Warwick Hughes .

http://www.warwickhughes.com/blog/?p=575

;

http://solberg.snr.missouri.edu/gcc/heatisland09.pdf

;

https://ams.confex.com/ams/Annual2006/techprogram/paper_100595.htm

; Not exactly what I would call compelling arguments, but rather some interesting observations. One reason this matters relates to the definitions of “urban” and “rural” that we use for studying the possibility of bias in the global temperature record. The argument, I have found, usually proceeds like this. The records of UHI for a large city such as Tokyo, Phoenix, or New York are shown and one might see figures for UHI that are 2,3 or even 9C. The reasonable inference from this is that these types of bias may be in the global temperature record. For a bit of background have a look at this

http://www.nasa.gov/topics/earth/features/heat-island-sprawl.html

Dr. Imhoff and his team do some great work and much of it has inspired me to dig a little deeper into the issue. Results like Imhoff’s on large cities create some concern that this UHI bias may be in the record. There are a few things we need to note. The UHI measured in these studies is typically SUHI, that is, the surface urban heat island, while thermometers measure the air temperature 2 meters above this surface. If SUHI were simply related to the air temperature above the surface, then this would not be a issue, however, air temperature is not simply related to the surface temperature although it can explain a good portion of the variance. The second issue with satellite measures of SUHI is that they are biased to capture the worst case. SUHI and UHI are modulated by the synoptic weather conditions. So, for example, on cloudy days or rainy days the heat islands that develop tend to be smaller than those on sunny days. Sensing the Land surface temperature requires a cloud free day. Hence the satellite measures will be biased high, or rather they will tend to capture the worst SUHI days. This approach is fine for understanding the human health concerns related to UHI and heat waves–another project I am working on, but one should not be surprised to find that even large cities don’t see 9C heat islands every day of the year. SUHI and UHI vary by weather and by season and by location. The other thing to note about Imhoff’s study is that it tended to focus on large cities. A comprehensive look at large cities was conducted by Peng who looked at 419 cities around the world

http://cybele.bu.edu/download/manuscripts/peng-uhi-est-2012.pdf

. Some interesting findings that tend to confirm some of the things I’ve thought. First, if you look at annual figures for SUHI ( or UHI ) you will come up with numbers typically less than 3C and second there appears to be a threshold to the bias at the high end. Peng argues, for example, that for these large cities that SUHI is insensitive to population density. Since these are all cities over 1 million it might be more precise to say that there is a threshold effect above certain densities. That’s consistent with what Oke already understood in 1973 when he fit maximum UHI with a log curve. Imhoff, appears to have found something similar in his studies focusing on impervious area as opposed to population density. In his study of 38 large US cities he found a similar log type relationship with the impervious area of a city. That single variable explains 71% of the variance in SUHI.  and

and  There are some interesting constrasts between Peng and Imhoff’s work that will take this post in a different direction that center around finding different driver for daytime SUHI and nighttime SUHI, but I’m going to focus on the rather small number of data points for cities less than 10 sq km in size. The goal for this project is to focus on small city SUHI using Imhoff’s approach and then perhaps Peng’s approach. My goal at the outset was to get something on the order of 100 to 500 small cities less than 10 sqkm in size. Along the way we will also see the limitations of some datasets for these types of studies. In due course we will see the limitations of using Grump population data ( Like Spenser uses ) for small areas and low population areas and we will see some difficulties in using Modis Urban. The Modis limitation is something I’ve struggled with before specifically in our AGU poster and then later in the Berkeley earth UHI paper. There were two basic issues. First, the Modis calibration was done on cities of 100K people or more. Given the size of the Modis pixel it is likely ( as one can see plowing through thousands of small cities) that Modis will miss small urban/town areas and identify areas as rural when in fact they are “small towns” or villages. The other issue with Modis that no one has pointed out is that I think some northern latitudes have snow cover where there are cities, so that Alaska, for example, is mis represented as more rural than it actually is. Checking the dates for when the Modis images were taken provides some confirmation of this. It appears this wasn’t caught in the calibration testing since all the cities used for calibration are at latitudes below 50 ( As I recall, I need to recheck all of this!) There are also some software goals in this project. There is a new Modis package in R that I am eager to use and some landscape statistic packages that I’ve always wanted to play with, so it’s a small bit of new science to learn and a lot of software fun. Let’s get started! Here is a brief synopsis of Imhoff’s approach ( Peng is a bit different but let’s save that till later ). A) identify the urban areas you want to study. B) identify the biome C) identify the rural area for comparison D) eliminate confounding variables E) process the Modis LST data for various time periods and seasons. F) regressions Along the way, we will learn something about the limitations of various datasets. A) Finding small urban areas. To find small urban/ small town areas I employed US census data. After banging my head against the wall with census block shapefiles, the collections by state are huge, I took a different path. I downloaded the gazetter files:

There are some interesting constrasts between Peng and Imhoff’s work that will take this post in a different direction that center around finding different driver for daytime SUHI and nighttime SUHI, but I’m going to focus on the rather small number of data points for cities less than 10 sq km in size. The goal for this project is to focus on small city SUHI using Imhoff’s approach and then perhaps Peng’s approach. My goal at the outset was to get something on the order of 100 to 500 small cities less than 10 sqkm in size. Along the way we will also see the limitations of some datasets for these types of studies. In due course we will see the limitations of using Grump population data ( Like Spenser uses ) for small areas and low population areas and we will see some difficulties in using Modis Urban. The Modis limitation is something I’ve struggled with before specifically in our AGU poster and then later in the Berkeley earth UHI paper. There were two basic issues. First, the Modis calibration was done on cities of 100K people or more. Given the size of the Modis pixel it is likely ( as one can see plowing through thousands of small cities) that Modis will miss small urban/town areas and identify areas as rural when in fact they are “small towns” or villages. The other issue with Modis that no one has pointed out is that I think some northern latitudes have snow cover where there are cities, so that Alaska, for example, is mis represented as more rural than it actually is. Checking the dates for when the Modis images were taken provides some confirmation of this. It appears this wasn’t caught in the calibration testing since all the cities used for calibration are at latitudes below 50 ( As I recall, I need to recheck all of this!) There are also some software goals in this project. There is a new Modis package in R that I am eager to use and some landscape statistic packages that I’ve always wanted to play with, so it’s a small bit of new science to learn and a lot of software fun. Let’s get started! Here is a brief synopsis of Imhoff’s approach ( Peng is a bit different but let’s save that till later ). A) identify the urban areas you want to study. B) identify the biome C) identify the rural area for comparison D) eliminate confounding variables E) process the Modis LST data for various time periods and seasons. F) regressions Along the way, we will learn something about the limitations of various datasets. A) Finding small urban areas. To find small urban/ small town areas I employed US census data. After banging my head against the wall with census block shapefiles, the collections by state are huge, I took a different path. I downloaded the gazetter files:

http://www.census.gov/geo/www/gazetteer/gazetteer2010.html

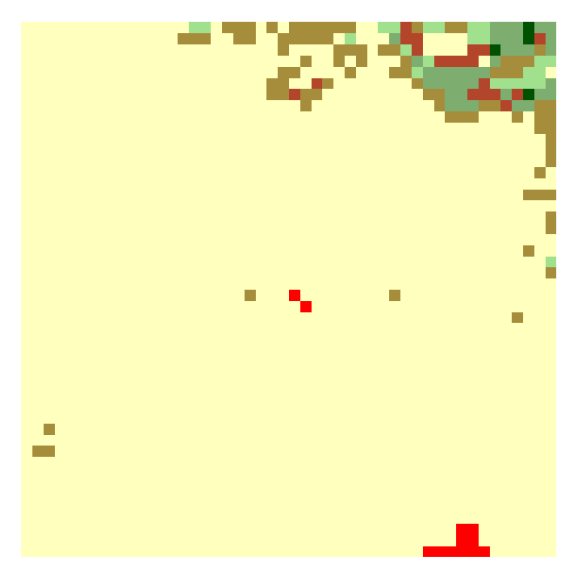

. Here I took the “Places” file which is basically a flat file of places in the US: cities, towns and CDPs or census designated Places. For now I’m working with 2010 data which is read in nicely by R. Later I’ll update with 2000 data which is not so easily read in. placesFile <- “G:\\USCensus\\Gaz_places_national.txt” Places <- read.delim(placesFile, stringsAsFactors = FALSE) The file contains all the information I need to conduct a quick search for areas that are small and have people. I also know that when I return to the census shapefiles for these areas I’ll have an easier job of finding what I want. Next I impose some limits on the areas I will be looking at. colnames(Places)[13]<-”Lat” colnames(Places)[14]<-”Lon” Places <- Places[Places$Lat < 50 & Places$Lat > 22 & Places$Lon > -130,] Places <- Places[Places$ALAND <= 10000000,] Places <- Places[Places$POP10 < 2500,] Places <- Places[Places$AWATER < 25000,] After changing the names of Lat and lon columns I limit my area of interest to CONUS. I do that for several reasons. I plan on working with NLCD landcover 2006 and Alaska and Hawaii are missing from that. Second, I have some concerns about Modis Landcover (urban) in Alaska. I may return to this selection criteria. Next I use the ALAND metric provided by the US census to select only those areas with less than 10Million sq meters of area or 10sq km. This area is the area of the polygon that defines that area for the census bureau. Again, I’m only using this to narrow a search I will be doing in the satellite data. Next, I limit the population size I am looking at. The Census data will contain many small physical areas that are located within huge urban sprawls and what we are looking for is small cities that are surrounded by rural or un populated areas. Lastly, I limit the amount of water than can be found within the bounding polygon. With this criteria we get a huge number of sites to look at on the order of 12,000. Plotting these and looking at them on google earth is my typical approach just to get an idea of potential problems any automated selection process will face.  The site statistics are as follows: The average population is 600, average number of houses is 275 amount of land in the average area is 2.5m sq meters and the 75% of the sites have less than 100 sq meters of water. After reviewing plots of some of these areas it was clear that I was still not finding what I was looking for so to winnow this collection more I turned to another dataset of Impervious surface, the global 1km dataset. Moving from a 30 meter dataset to a kilometer dataset makes the search faster.For the final look at things we will revert back to the 30 meter data, but first we need to find a good selection of small cities that are surrounded by rural environment. I used two versions of the data the standard dataset which contains Isa values from 0 to 100 ( with -1 for water) and a reformated version of that file where 0 represents no Isa and 1-100 is recoded as 1. This allows me so simply sum to find the number of 1km cells that have any Isa. Places <- cbind(Places, Isa= extract(Isa,cbind(Places$Lon,Places$Lat))) Places <- Places[Places$Isa >= 0,] Places <- cbind(Places, Isa10sqkm = extract(IsaB, cbind(Places$Lon,Places$Lat), buffer = 3000, fun = sum, na.rm= TRUE)) Places <- Places[Places$Isa10sqkm > 0,] Places <- Places[Places$Isa10sqkm <= 36,] The processing above removes and location where water is at the center of the site location and removes those sites that are embedded in areas of other cities. At this stage we are left with 7445 sites which I then review by looking at plots while counting the total number of 1km Isa cells in a 1/2 degree zone about the site location. for( i in 1:nrow(Places)){ e <- getExtent(IsaB,.5,c(Places$Lon[i],Places$Lat[i])) patch<- crop(IsaB,e) icount <- count(patch,1) Places$IsaCount[i]<-icount fname <- paste(i,”Patch.png”,sep=”_”) fname <- file.path(“Patches”,fname,fsep=.Platform$file.sep) png(fname, 785,785) plot(patch, main= icount ) dev.off() print(i/nrow(Places)) } That code creates 7445 plots of all the potential locations and counts the number of cells in a 1/2 degree zone. I then review those plots. Here is small sample

The site statistics are as follows: The average population is 600, average number of houses is 275 amount of land in the average area is 2.5m sq meters and the 75% of the sites have less than 100 sq meters of water. After reviewing plots of some of these areas it was clear that I was still not finding what I was looking for so to winnow this collection more I turned to another dataset of Impervious surface, the global 1km dataset. Moving from a 30 meter dataset to a kilometer dataset makes the search faster.For the final look at things we will revert back to the 30 meter data, but first we need to find a good selection of small cities that are surrounded by rural environment. I used two versions of the data the standard dataset which contains Isa values from 0 to 100 ( with -1 for water) and a reformated version of that file where 0 represents no Isa and 1-100 is recoded as 1. This allows me so simply sum to find the number of 1km cells that have any Isa. Places <- cbind(Places, Isa= extract(Isa,cbind(Places$Lon,Places$Lat))) Places <- Places[Places$Isa >= 0,] Places <- cbind(Places, Isa10sqkm = extract(IsaB, cbind(Places$Lon,Places$Lat), buffer = 3000, fun = sum, na.rm= TRUE)) Places <- Places[Places$Isa10sqkm > 0,] Places <- Places[Places$Isa10sqkm <= 36,] The processing above removes and location where water is at the center of the site location and removes those sites that are embedded in areas of other cities. At this stage we are left with 7445 sites which I then review by looking at plots while counting the total number of 1km Isa cells in a 1/2 degree zone about the site location. for( i in 1:nrow(Places)){ e <- getExtent(IsaB,.5,c(Places$Lon[i],Places$Lat[i])) patch<- crop(IsaB,e) icount <- count(patch,1) Places$IsaCount[i]<-icount fname <- paste(i,”Patch.png”,sep=”_”) fname <- file.path(“Patches”,fname,fsep=.Platform$file.sep) png(fname, 785,785) plot(patch, main= icount ) dev.off() print(i/nrow(Places)) } That code creates 7445 plots of all the potential locations and counts the number of cells in a 1/2 degree zone. I then review those plots. Here is small sample

The review of the 7000 plots suggested that if I wanted to find examples where there was a small amount of Isa in the center of the plot and “non isa” surrounding it that a limit of 1500 total Isa cells in the 1/2 degree zone would go a considerable way toward that goal. In addition, at this stage I winnowed the sites by Imhoff’s biome criteria. Since the biome that the urban site is embedded in drives the differential in temperature we want to make sure that a city is not in an area where the biome changes. The biome within 1/2 a degree of the site is check to ensure that a city is not in an area where biomes change. These two cuts through the data reduce the number of sites to examine to roughly 2400. At this stage the underlying statistics of the area have not changed with the average population still be around 600 and the average sq km being 2.5. In addition, we now have tagged every site with its biome. The next step was plot all 2400 sites using 30 meter NLCD impervious surface data. The following gives you an idea of the detail available.

The review of the 7000 plots suggested that if I wanted to find examples where there was a small amount of Isa in the center of the plot and “non isa” surrounding it that a limit of 1500 total Isa cells in the 1/2 degree zone would go a considerable way toward that goal. In addition, at this stage I winnowed the sites by Imhoff’s biome criteria. Since the biome that the urban site is embedded in drives the differential in temperature we want to make sure that a city is not in an area where the biome changes. The biome within 1/2 a degree of the site is check to ensure that a city is not in an area where biomes change. These two cuts through the data reduce the number of sites to examine to roughly 2400. At this stage the underlying statistics of the area have not changed with the average population still be around 600 and the average sq km being 2.5. In addition, we now have tagged every site with its biome. The next step was plot all 2400 sites using 30 meter NLCD impervious surface data. The following gives you an idea of the detail available.

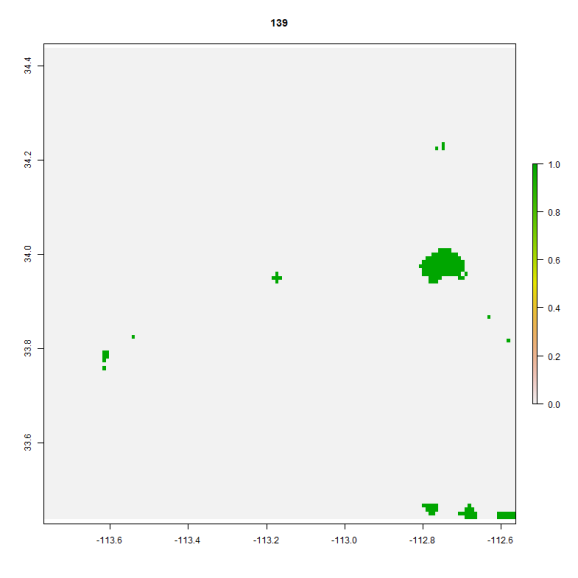

With these 2400 sites I figure I have a good chance of finding a fairly large sample of small cities that I can perform the “Imhoff” style analysis on. I will say that looking through thousands of maps for small urban areas gives me a good idea of some of the difficulties ahead. It always helps to get really down and dirty with the data. Reviewing the Imhoff approach one should note that he defines Urban areas by NLCD Isa with various zones such as an urban core at 75-100% Isa and then surounding zones at various levels. His cut off is 25% Isa. One should also note that he checks this against Modis Urban. That check is the check I turn to next. Why Modis Urban? The answer lies in the bowels of MODIS LST. Land Surface temperature data is estimated using a variety of MODIS products. On critical unknown is emmissivity. That is estimated from Modis Land cover. Simply put, if Modis Land cover doesnt think a pixel is urban it will not apply the correct emissivity and the temperature will not be estimated correctly. My next step then was to use Modis Land cover to winnow the 2400 sites down to those where Modis thought the small urban area was ”Modis Urban”. In this next step I add a few more constraints, basically, steps to ensure that the small urban center is surrounded only by non urban modis pixels. That step reduces the dataset down to 149 stations, mapped below

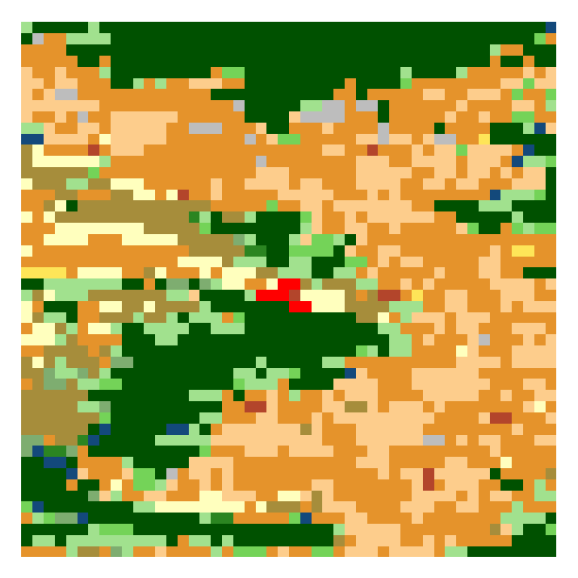

With these 2400 sites I figure I have a good chance of finding a fairly large sample of small cities that I can perform the “Imhoff” style analysis on. I will say that looking through thousands of maps for small urban areas gives me a good idea of some of the difficulties ahead. It always helps to get really down and dirty with the data. Reviewing the Imhoff approach one should note that he defines Urban areas by NLCD Isa with various zones such as an urban core at 75-100% Isa and then surounding zones at various levels. His cut off is 25% Isa. One should also note that he checks this against Modis Urban. That check is the check I turn to next. Why Modis Urban? The answer lies in the bowels of MODIS LST. Land Surface temperature data is estimated using a variety of MODIS products. On critical unknown is emmissivity. That is estimated from Modis Land cover. Simply put, if Modis Land cover doesnt think a pixel is urban it will not apply the correct emissivity and the temperature will not be estimated correctly. My next step then was to use Modis Land cover to winnow the 2400 sites down to those where Modis thought the small urban area was ”Modis Urban”. In this next step I add a few more constraints, basically, steps to ensure that the small urban center is surrounded only by non urban modis pixels. That step reduces the dataset down to 149 stations, mapped below  A few things to note. There are some sites that are located near water which should make an interesting bit of analysis. As I recall recall Imhoff has special handling for water pixels. There are a few more restrictions that Imhoff employs for “rural” pixels that will probably reduce this set even more and then there are pixels that need to be removed because of altitude differences, but with 150 sites to work with I think I have a manageable number. At this stage I wanted to also check out the land cover surrounding a station. Imhoff used Biomes which really dont give a current day view of the land cover rather they give a historical view of things. I may tweak that part of the analysis. Here are some sample of land cover around the sites. Sites are coded in red..I’m still playing around with ‘rasterVis’ to get legends about land cover to work out

A few things to note. There are some sites that are located near water which should make an interesting bit of analysis. As I recall recall Imhoff has special handling for water pixels. There are a few more restrictions that Imhoff employs for “rural” pixels that will probably reduce this set even more and then there are pixels that need to be removed because of altitude differences, but with 150 sites to work with I think I have a manageable number. At this stage I wanted to also check out the land cover surrounding a station. Imhoff used Biomes which really dont give a current day view of the land cover rather they give a historical view of things. I may tweak that part of the analysis. Here are some sample of land cover around the sites. Sites are coded in red..I’m still playing around with ‘rasterVis’ to get legends about land cover to work out

The site stats now look like this: Population ranges from 141 to 2417 with a mean of 999 and a median of 879. The size of the community according to cadastral boundaries is from rounghly .5 sqkm to 10 sq km with a mean of around 3km. and the population density according to census figures runs from 36 people per sq km to 1200.

R-bloggers.com offers daily e-mail updates about R news and tutorials about learning R and many other topics. Click here if you're looking to post or find an R/data-science job.

Want to share your content on R-bloggers? click here if you have a blog, or here if you don't.